While looking for something else, I happened on the National Society of Professional Engineers (NSPE) Rules of Practice regarding engineering communications. Here’s what they say about the ethics of technical writing.

Engineers shall be objective and truthful in professional reports, statements, or testimony. They shall include all relevant and pertinent information in such reports, statements, or testimony, which should bear the date indicating when it was current.

If you want your writing to be objective, start by doing good science. That is, follow the scientific method. Start with a hypothesis, not a conclusion or an agenda. Design an investigation to test your hypothesis. And build in ways to check your data. That may mean comparing your findings with what others have reported in the literature. If possible, use multiple test methods so the results from one method overlap or shed light on the results from the others. Then base your conclusions on the data.

Throughout the investigation and the reporting, never cross the line between science and advocacy. You are not selling a product or trying to win the case for your client. You are there to determine and report the facts as dispassionately as you can. This is how you best promote public health, safety, and welfare in your role as an engineer.

Make sure you’re clear about who the authors are. That’s important for your reader to know, and it ensures that you give credit where it’s due.

The purpose of technical writing is to convey scientific information clearly and accurately. It should be both objective and impersonal in style. It should appeal to the intellect, not the emotions or imagination, of the reader. And it should rely heavily on facts and data.

The ethics of technical writing in practice

How you organize the information can highlight some things and obscure others. Often engineers default to a chronological narrative, even when the time order of events isn’t important.

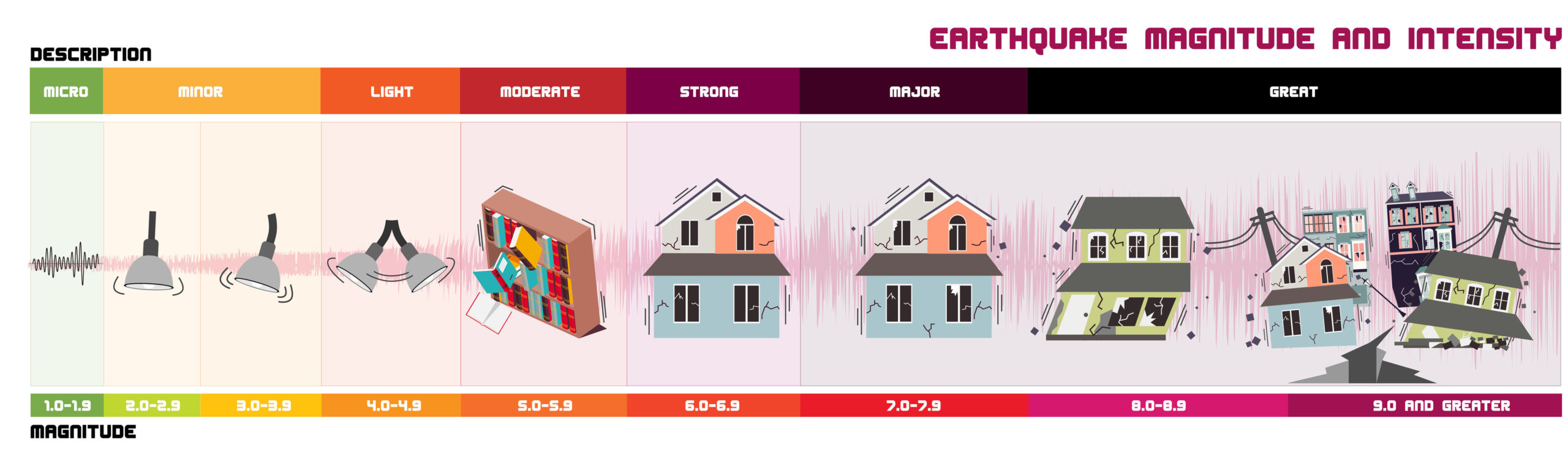

For example, as editor of a journal I received a manuscript from structural engineers who had examined buildings that had survived a severe earthquake. Their manuscript read like a disaster travelogue, complete with photos of the authors in front of what remained of local landmarks. If only they had considered why they were there! The point of examining post-earthquake damage is to learn what worked and what didn’t so that we can improve our building codes. The chronological narrative of their observations made it difficult to extract the relevant information.

If they had organized the discussion spatially, by distance from the epicenter, it would have been easy for the reader to compare performance under equally severe shaking. If they had organized it according to which version of the local building code was in force at the time of construction, they would have emphasized the relative effectiveness of code provisions. Either method would have been more useful than a chronology, but the latter would have directly addressed their mission.
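To make the point about organization concrete, here is a minimal sketch in Python. The records, field order, and the `group_by` helper are all hypothetical placeholders invented for illustration; they are not real survey data. The sketch only shows that the same observations, regrouped by building-code edition rather than by the order of the site visits, line up for direct comparison.

```python
from collections import defaultdict

# Hypothetical placeholder records, NOT real survey data.
# Each tuple: (visit_order, code_edition, damage_rating)
observations = [
    (1, "edition B", "moderate"),
    (2, "edition A", "severe"),
    (3, "edition B", "minor"),
    (4, "edition A", "moderate"),
]


def group_by(records, key_index):
    """Group records by the field at key_index, preserving input order."""
    groups = defaultdict(list)
    for record in records:
        groups[record[key_index]].append(record)
    return dict(groups)


# Organize by code edition instead of by the order of the site visits.
by_code = group_by(observations, key_index=1)
for edition in sorted(by_code):
    ratings = [damage for _, _, damage in by_code[edition]]
    print(edition, ratings)
```

Grouping by distance from the epicenter would work the same way with a different key.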

Similarly, how you present information graphically or in tabular form may either highlight or obscure patterns in the data. You can plot any two quantities in an x-y plot or juxtapose any assortment of data in a table, but they don’t mean anything unless they’re somehow related. You may need to experiment with how you graph or tabulate your data to see what patterns emerge.

And remember, correlation is not causality. If A and B occur together, A may have caused B, B may have caused A, both could be due to another cause, C, or they may simply be coincidental. Don’t assume or imply causality.
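The "another cause, C" case is easy to demonstrate with simulated numbers. The sketch below is only an illustration under stated assumptions: the data are synthetic, the noise levels and sample size are arbitrary choices, and `pearson` and `partial_corr` are helper names introduced here. A and B are each generated from C plus independent noise, so neither causes the other, yet they correlate strongly until C is accounted for.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def partial_corr(x, y, z):
    """Correlation of x and y after removing the linear effect of z."""
    r_xy, r_xz, r_yz = pearson(x, y), pearson(x, z), pearson(y, z)
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz**2) * (1 - r_yz**2))


# Synthetic data: C drives both A and B; A and B never influence each other.
rng = random.Random(0)  # fixed seed so the run is repeatable
c = [rng.gauss(0, 1) for _ in range(2000)]
a = [ci + rng.gauss(0, 0.5) for ci in c]
b = [ci + rng.gauss(0, 0.5) for ci in c]

r_ab = pearson(a, b)
r_ab_given_c = partial_corr(a, b, c)
print(f"corr(A, B)           = {r_ab:.2f}")
print(f"corr(A, B | C fixed) = {r_ab_given_c:.2f}")
```

The raw correlation is strong, while the partial correlation is close to zero. A report that showed only the first number would invite the reader to infer a causal link that the data do not support.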

What information is relevant?

Standardized tests have protocols for reporting that tell you what to include. If you’ve designed your own experimental program, you’ll have to figure out what matters. Erring on the side of more detail will give you more “tracks” to follow in case of unexpected results. Having a form to fill out will remind you to collect your data consistently. If you later find that a certain factor isn’t important, you can either leave it out or show that it didn’t affect the results.

What about erroneous data and outliers? If you can identify a specific error in the testing, you should omit the resulting data. There are statistical tests to identify outliers, but some test methods inherently have a wide scatter, so a point that looks extreme may still be valid. When in doubt, include the data. Whenever you discard data, be sure to report what you omitted and why.
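One way to keep that discipline is to have the screening step flag suspect values rather than silently delete them. The sketch below uses Tukey's interquartile-range fences, which is just one common screening rule and not necessarily the right one for a given test method. The readings are made-up numbers, and `flag_outliers` and the multiplier `k=1.5` are illustrative choices.

```python
import statistics


def flag_outliers(values, k=1.5):
    """Split values into (kept, flagged) using Tukey's IQR fences.

    Nothing is deleted: flagged values are returned so they can be
    reported along with the rule that flagged them.
    """
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    kept = [v for v in values if low <= v <= high]
    flagged = [v for v in values if not (low <= v <= high)]
    return kept, flagged, (low, high)


# Hypothetical readings for illustration only.
readings = [10.1, 9.8, 10.3, 10.0, 9.9, 10.2, 14.7]
kept, flagged, fences = flag_outliers(readings)

print(f"Screening rule: outside [{fences[0]:.2f}, {fences[1]:.2f}]")
print(f"Flagged for review ({len(flagged)}): {flagged}")
print(f"Retained ({len(kept)}): {kept}")
```

The function only identifies candidates. Whether a flagged value is actually dropped remains an engineering judgment, and the flagged list and the rule go into the report either way.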

It’s also important to include all the relevant references in your introduction and in evaluating your results. Include the dates of publication or, in the case of a website, the date you accessed it. Websites are revised as new information becomes available, so the access date tells your reader which version you relied on.

You may need to omit some things to stay within the page limit for publication. However, don’t cherry-pick the papers that support your desired conclusion while ignoring those that don’t. The conclusion should be the end, not the beginning, of your investigation.

Lastly, the conclusion should flow naturally and logically from everything you’ve presented before it. There’s no place for surprise endings in technical communication.